What is a Customer Data Platform (CDP)? CDP Explained

Customer Data Platforms (CDPs) have become an important way to deliver analytics and insights that enhance customer experiences and monetization. In Salesforce’s latest “State of Marketing” report, 78% of high performers said they use a CDP, versus 58% of underperformers.

In this blog, we’ll explain what a CDP is, how it differs from a data lake and an enterprise data warehouse, and what its features and components are. We’ll also outline how you can get started on this journey and how to minimize deployment times to maximize your ROI.

What is a Customer Data Platform?

A Customer Data Platform (CDP) is software that collects customer data from a variety of sources, such as websites, mobile apps, social media, and customer support and commerce interactions. It then creates a complete profile of each customer, including details such as demographics, behavior, purchase history, and preferences. This data is used to generate insights that lead to personalized marketing campaigns, better customer experiences, and optimized sales strategies.

In our view, a CDP has five distinct modules:

- Visualization of data: the traditional dashboards that allow users to slice and dice the data in various ways.
- AI models: models that generate predictive insights about scenarios such as customer behavior, content monetization, churn, and promotion uplift.
- What-if scenario analysis: an advanced visualization module based on the outputs of the AI models. It lets you answer questions and get clear insights for decision making, for example, “What will be the impact of this promotion on customer segment A?”
- Data repository: it’s common for a CDP to have its own data repository. However, for organizations with a highly mature data warehouse, this layer could be implemented in a federated fashion, avoiding the need to store data permanently in the CDP itself.
  There are pros and cons to each approach.
- API integration: since integrating insights into operations is the biggest challenge facing organizations today, the significance of this layer cannot be overemphasized. This layer allows enterprise applications and content management systems to consume the AI insights.

How is a Customer Data Platform (CDP) different from a data lake?

We briefly covered CDP vs data lake in our blog titled “AI Led Customer Data Platform (CDP) for Retail”. In our view, a CDP has the express purpose of leveraging insights to make decisions. It is designed to collect and unify customer data from various sources into a unified customer profile. This profile and other data are then used by AI models to improve customer experiences, personalize marketing campaigns, and optimize sales strategies.

A data lake, on the other hand, is a repository that serves as the target destination for all types of data (structured, semi-structured, and unstructured) from different sources. While a data lake can be used for customer-profile-based advanced analytics and business intelligence, it is not built for that specific purpose. It is intended to be flexible and accessible for a wide range of analytics purposes. So, while a CDP is created with specific analytical models in mind, a data lake is more generic and often serves as the single source of data for the rest of the enterprise.

How to get started with your Customer Data Platform (CDP) journey?

Once you are ready for a CDP, you can either implement a proprietary CDP platform or choose the technology you prefer and use a CDP accelerator. A CDP accelerator not only reduces the time needed to deploy a full CDP but also provides a few other benefits:
- It has predefined AI models that you can simply test and train on your data, and launch after making any adjustments.
- The what-if scenarios are therefore also predefined.
- The data schema that enables the models is well defined, which accelerates your data integration efforts.
- It does not lock you into long-term technology choices.

Thus, a CDP accelerator offers a flexible middle ground in the enterprise build vs buy dilemma. As is usually the case, each approach has pros and cons, and a proper analysis should be carried out before making a decision. Read about our CDP accelerator for media here.

Does a CDP address only marketing use cases?

Contrary to popular belief, a CDP is not just for marketing or sales use cases. Since it is a hub of all data about a customer, use cases that support service and operations can also be implemented. For retail companies, for example, we could think of warranty analytics, service analytics, and so on. Store personalization and demand analytics may also be helpful use cases. Hence, customer analytics may be a better term than marketing and sales analytics to describe a CDP’s purpose.

Conclusion

As the power of AI becomes more accessible, digital-first organizations must embrace the concept of the CDP to enhance the effectiveness of customer analytics. The following are some key takeaways:
- Integration of AI insights into business applications using APIs must remain top of mind to maximize business impact.
- A balanced coupling between the enterprise data lake and the CDP must be created. Data does not always have to be duplicated, and even if it is duplicated, there are ways to provide updates back to the source systems. See our blog on Domo connectors for that.
- The choice of platform ranges from custom to a licensed product. A CDP accelerator can offer a good balance. Make a choice after considering your requirements and tech landscape.

Also read more blogs on CDP for the Retail Industry and CDP for Media.
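To make the unified customer profile from the definition at the start of this post more concrete, here is a minimal Python sketch. It assumes identity resolution has already happened, so every incoming record carries a `customer_id`; the record fields (`source`, `event`, `amount`, `attributes`) and the `CustomerProfile` class are hypothetical and not part of any particular CDP product.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class CustomerProfile:
    customer_id: str
    attributes: dict = field(default_factory=dict)  # demographics, preferences
    # Behavior counts keyed by "source:event", e.g. "web:page_view"
    event_counts: defaultdict = field(default_factory=lambda: defaultdict(int))
    total_spend: float = 0.0  # purchase-history rollup


def build_profiles(records):
    """Fold records from many sources into one profile per customer_id."""
    profiles = {}
    for rec in records:
        cid = rec["customer_id"]
        if cid not in profiles:
            profiles[cid] = CustomerProfile(cid)
        profile = profiles[cid]
        profile.attributes.update(rec.get("attributes", {}))  # later records win
        profile.event_counts[f"{rec['source']}:{rec['event']}"] += 1
        profile.total_spend += rec.get("amount", 0.0)
    return profiles


# Hypothetical records from web, commerce, app, and support sources
records = [
    {"customer_id": "c1", "source": "web", "event": "page_view"},
    {"customer_id": "c1", "source": "commerce", "event": "purchase", "amount": 40.0},
    {"customer_id": "c1", "source": "app", "event": "purchase", "amount": 10.0,
     "attributes": {"city": "Leeds"}},
    {"customer_id": "c2", "source": "support", "event": "ticket",
     "attributes": {"tier": "gold"}},
]
profiles = build_profiles(records)
print(profiles["c1"].total_spend)  # 50.0
```

Letting later records overwrite attributes is a deliberately simple merge policy; real CDPs typically apply per-attribute survivorship rules (for example, most recent value or most trusted source).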

How to choose the right Agile methodology for project development in a frugal way

Earlier, the role of technology was limited to being a mere business enabler. But in today’s evolving business landscape, technology is seen as the major element of business transformation. This puts enterprises under tremendous pressure to build disruptive products capable of transforming their business. An efficient project management model for software development is crucial here to deliver the right technology solutions at the right time.

Traditional project development models such as Waterfall are rigid and depend heavily on documentation. In such models, customers get a glimpse of their long-awaited product or application only towards the end of the delivery cycle. Through continuous communication and improvement in the project development cycle, regardless of the diverse working conditions, enterprises need to ensure that application development is completed within the stipulated time without compromising on quality. Without an efficient delivery method, the software development team often comes under pressure to release the product on time.

According to a survey by Tech Beacon, almost 51% of companies worldwide are leaning towards agile, while 16% have already adopted pure agile practices. While these statistics favour agile for application development, both service providers and customers face some major challenges while practising agile.

Communication gap

When the team is geographically distributed, communication gaps are a common problem. In a distributed agile development model, passing information from one team to another can create confusion and lose the essence of the context. An outcome-based solutions model, with team members present at client locations, enables direct client interaction and prompt responses on both sides.
Time zone challenges

Another challenge clients face in a distributed agile development environment is diverse time zones. With teams working in different time zones, it is often difficult to find common working hours when every team is available. With an outcome-based solutions model, customers can rest assured of prompt assistance during emergencies. Moreover, the client stays updated on project progress, which makes iterations easier.

Cultural differences

In a distributed agile team, differences in work ethics, commitment, holidays, and culture create a gap between the development team and the customer. In situations like these, a panel of experts, including ex-CIOs and industry experts, can help by providing customers with valuable insights on current market trends and solutions to close any cultural gaps.

Scope creep

Scope creep is another issue faced by countless customers whose agile teams work on software development projects from multiple locations. Here, the missing pillar is an offshore scrum master who defines and estimates the tasks, together with onsite representatives who communicate every client requirement.

A closer look at these challenges suggests the need for a properly architected and innovative agile model to resolve them. With a carefully devised agile strategy, it becomes much easier for the client and development sides to interact frequently and overcome these obstacles.

Conceptual Architecture of Customer Data Platform (CDP)

A customer data platform (CDP) is vital for businesses today. It brings together many dimensions of customer information so that a 360-degree understanding of each customer can be created and enriched with information from multiple sources. The final aim is to generate insights and AI models that drive better customer experiences, commerce (purchases, cross-sell), and operational efficiency.

However, enterprises often face the following two challenges:
- CDP programs tend to become too complex, delaying the return of value to the business.
- The systematic deployment of AI insights into operations, as part of closed-loop analytics, remains a challenge for the industry.

These challenges are the motivation for this post. Identifying and implementing a CDP does not have to be complex. In this post, I want to present a bird’s eye view of the primary data and technical architecture components that business and technology leaders must consider as they embark on CDP programs, whether licensing a platform from a leading vendor or customizing an open-source product.

Conceptual Architecture of Customer Data Platform – Explained Below

A CDP can be thought of in terms of the following key functional blocks, which span the overall data management lifecycle. Thinking in terms of a functional architecture is helpful because it aligns the CDP more closely with its purpose. Let’s go bottom-up and build up the layers one at a time, which makes the multiple layers of the CDP architecture easier to understand.

Level 0: The core data repository

This is the core Customer 360 vision for a CDP. Ideally, the development of this level should be aligned with the benefits we wish to drive through AI and better insights, such as personalization, audience analysis, cross-sell, better conversions, fewer stock-outs, and less locked inventory.
This layer is often virtualized and consists of many functional blocks representing the key data workstreams that will help us deliver the business objectives. For example, online clickstream data, transactions, and purchase behavior are often all part of this layer. To enable this comprehensive repository, the integration architecture must support both real-time and batch ingestion mechanisms. At this level we also face the challenges of customer identity resolution and master data management.

As with any data management program, creating this level means managing external and internal sources, multiple staging and cleansing layers, data quality, data masking, and so on. Because of the inherent complexity of data management, this level can often overwhelm and dominate the scope of a CDP program. We must strategically define the (never-ending) scope of Level 0 so that business ROI can be generated in a timely manner. In other words, this layer must be built incrementally, led by business value.

Level 1: Insights Generation Engine

This architectural component is generally not considered part of the core data repository, but it is critical to a CDP delivering the promised outcomes. Typically, the analytical use cases here are defined with a view to the desired business outcomes. For example, one of the most common insights to generate is customer segmentation, and any subsequent models must keep the segmentation in mind. Failing to keep segmentation front and center leads to sub-optimal outcomes. For example, we could run a campaign based on incorrect segmentation, leading to product cannibalization in the market, or we could try to fix an operational supply issue without clear insight into whether the issue disproportionately affects a specific customer segment.

This level is also closely aligned with traditional business intelligence: visualizing data across the many possible slices and dimensions.
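As an illustration of Level 1, one simple way to produce a first segmentation is rule-based RFM (recency, frequency, monetary value) scoring. The sketch below rests on stated assumptions: the segment names and thresholds are hypothetical, and many CDPs use clustering or predictive models instead. The point it shows is that each customer gets exactly one segment label that later models and campaigns can join on.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Customer:
    customer_id: str
    last_purchase: date
    orders: int
    spend: float


def rfm_segment(c, today, recent_days=30, frequent_orders=5, high_spend=500.0):
    """Map recency, frequency, and monetary value to one coarse segment.
    Thresholds are illustrative assumptions, not recommended values."""
    recent = (today - c.last_purchase).days <= recent_days
    frequent = c.orders >= frequent_orders
    valuable = c.spend >= high_spend
    if recent and frequent and valuable:
        return "champion"
    if valuable and not recent:
        return "at_risk_high_value"  # a win-back target, not a cross-sell target
    if recent:
        return "active"
    return "lapsed"


def segment_all(customers, today):
    """One label per customer, so downstream models and campaigns share it."""
    return {c.customer_id: rfm_segment(c, today) for c in customers}
```

A campaign that targets "champion" customers with a cross-sell offer while "at_risk_high_value" customers receive a win-back offer is exactly the kind of segment-aware decision the paragraph above argues for.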
Level 2: Program and Campaign Management

This functional component of the CDP provides structure to campaigns and ensures that we do not create conflicts or overlaps between them. This conceptual block is where most business-facing programs are managed and executed. For example, we could be executing a cross-sell campaign and a product adoption campaign simultaneously, or a premium brand could be running campaigns aimed at the audience of a lower-priced brand. So, this component, although not purely technical, serves as a key governance tool. This level also flows directly into management reporting on campaign performance and attribution.

We also often define the Level 0 (Customer 360) requirements at this level. That allows the Level 0 layer to be built incrementally and in an Agile fashion, with a view towards the expected business value.

Level 3: AI Operationalization

This functional block is the mechanism by which insights generated in Level 1 are integrated with operational systems or customer engagement systems. Failing to consider this component of the CDP holistically is one of the primary reasons that AI programs often fail to be systematically used in production operational systems. For example, once insights on cross-sell targeting are generated and a campaign is kicked off, the extent to which this is automated and integrated with the eCommerce systems defines the success or failure of most CDP and AI programs. After all, this is the moment of truth.

Insights implementation will depend on a variety of factors such as available budgets, technology constraints, and operational conflicts. However, partial versus full implementation must be analyzed and planned consciously. Technologically, AI-generated insights are consumed through various means, such as an API gateway. This level also builds in the reverse data feedback loops that feed back into Level 0.
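The Level 3 loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: `InsightStore` is a hypothetical in-memory stand-in for Level 0, the propensity scores are assumed to come from a Level 1 model, and the JSON body is what an API gateway might forward to an eCommerce system (no real endpoint or product API is implied). Recording outcomes is the reverse feedback path into Level 0.

```python
import json
from dataclasses import dataclass, field


@dataclass
class InsightStore:
    """Hypothetical stand-in for Level 0: scores plus outcomes fed back from operations."""
    scores: dict  # customer_id -> cross-sell propensity in [0, 1]
    outcomes: list = field(default_factory=list)


def build_cross_sell_payload(store, campaign_id, threshold=0.7):
    """Select customers at or above the threshold and serialize them as a JSON
    body that an API gateway could forward to an eCommerce system."""
    targets = sorted(cid for cid, score in store.scores.items() if score >= threshold)
    return json.dumps({"campaign_id": campaign_id, "customer_ids": targets})


def record_outcome(store, customer_id, converted):
    """Feedback loop: write the operational result back so models can be retrained."""
    store.outcomes.append({"customer_id": customer_id, "converted": converted})


def conversion_rate(store):
    """Share of targeted customers who converted (0.0 if nothing recorded yet)."""
    if not store.outcomes:
        return 0.0
    return sum(o["converted"] for o in store.outcomes) / len(store.outcomes)
```

Keeping the outcome records next to the scores is what lets the next model iteration learn from the campaign, which is the closed loop the text describes.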
When this cycle is optimized, the closed-loop analytics mission is properly met.

Level 4: Management Reporting

The final component in the conceptual architecture of a customer data platform (CDP) is the generation of insights for decision making and control. This is where we generate metrics on sales performance, product adoption, etc. This level also includes the traditional components of exploratory data analysis and hypothesis generation so that new ideas can emerge. We cannot control and improve what we cannot measure. So, thinking of

Customer Data Platform (CDP) for Media: Three AI Use Cases

As media and entertainment become ubiquitous in our lives, multiple priorities are unfolding for media companies and brands. They need to innovate constantly to understand our preferences and behavior so that their content reaches the right people on the right channels and media. AI and big data are important drivers of this capability. In this blog, we’ll outline three AI use cases in a CDP that media companies should implement to stay ahead in digital customer engagement. Because a Customer Data Platform (CDP) is essential to delivering the AI and analytics charter, we will also explore the interlinkages between the two.

What is a Customer Data Platform (CDP)?

A Customer Data Platform (CDP) allows you to unify and manage customer data from multiple sources in a central location. Media companies can benefit greatly from a CDP because of the large amount of first-, second-, and third-party data they receive. For example, clickstream data from their web properties is an important source, and so is advertising data sourced from the networks.

Although it is a common deployment scenario, a CDP does not have to integrate directly with many data sources. Instead, mature enterprises are consolidating their enterprise data into a data lake repository such as Snowflake, and then pulling data from there to do AI modeling, create dashboards, and perform what-if analytics in a platform such as Domo. CDPs can be implemented in different ways because, depending on the data maturity of the enterprise, not all data is in pristine condition in a globally centralized data lake.

Why is AI important in a CDP platform?

One of the many reasons a CDP is implemented is that it focuses business and technology efforts on a narrow set of AI use cases. This ensures faster time to market.
Today, traditional dashboards that provide a view into the past are not enough for cause-and-effect analysis of the many variables in play, and decision making can be improved. Implementing AI can dramatically improve the overall effectiveness of your business intelligence initiatives because AI can predict and prescribe. The ability to deploy a CDP quickly and deliver results is much enhanced if the CDP platform is capable of data ingestion, AI modeling, dashboarding, what-if analysis, and API integration of insights. Ignitho’s CDP accelerator has these components, with the added advantage that it lets you maintain your investments in technologies such as Snowflake, Domo, Microsoft, GCP, and AWS.

Key Customer Data Platform (CDP) Use Cases for Media

Personalization & Promotion Uplift

In a crowded digital marketplace, it is important to personalize content recommendations and advertising for each user. By unifying data from different sources, such as website interactions, social media, multiple channels, and email statistics, a CDP helps you gain a holistic view of the audience, both at the segment level and at the individual level. You can then provide real-time, personalized recommendations based on interests, preferences, and behavior. The insights from AI models in the CDP can be used directly in customer experience systems to improve performance, or they can be used for advanced what-if scenario analysis, such as gauging the effect of a planned promotion.

Subscriber Retention & Content Affinity

This is an important content monetization use case as publishers experiment with new content formats and packages at different prices. The aim is to identify subscribers at risk of disengaging, which can be done, for example, by analyzing changes in engagement levels or patterns. By identifying these customers early, we can take targeted actions such as offering personalized discounts or promotions.
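A minimal sketch of this early-warning idea, assuming weekly engagement minutes per subscriber are available from the CDP: compare the most recent weeks with an earlier baseline and flag large relative drops. The window sizes and the 40% threshold are illustrative assumptions; in practice this heuristic would typically be replaced or complemented by a trained churn model.

```python
from statistics import mean


def engagement_drop(weekly_minutes, baseline_weeks=4, recent_weeks=2):
    """Relative drop of recent average engagement vs. an earlier baseline.
    Returns 0.0 when engagement held steady or grew."""
    if len(weekly_minutes) < baseline_weeks + recent_weeks:
        raise ValueError("not enough history")
    baseline = mean(weekly_minutes[-(baseline_weeks + recent_weeks):-recent_weeks])
    recent = mean(weekly_minutes[-recent_weeks:])
    if baseline == 0:
        return 0.0
    return max(0.0, (baseline - recent) / baseline)


def at_risk(subscribers, drop_threshold=0.4):
    """Return ids of subscribers whose engagement fell by at least drop_threshold."""
    return sorted(
        sid for sid, history in subscribers.items()
        if engagement_drop(history) >= drop_threshold
    )
```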
Using the API layer of the CDP, the insights from the AI models can be integrated directly into content management and promotion systems, improving overall responsiveness.

Price Sensitivity

If we understand customer behaviors and preferences, we can identify potential churn risks early on, take targeted actions proactively, and increase engagement. In addition to ongoing A/B tests on engagement and adoption, a CDP can help analyze who is likely to churn given a price increase or change. These insights are valuable because we can then act proactively to prevent churn and improve retention considerably. It is often more difficult to re-engage customers than to maintain or enhance their engagement.

Which Supporting Capabilities to Examine?

Implementing a robust Customer Data Platform (CDP) requires a combination of technological, operational, and strategic capabilities.

Capturing Zero-Party Data

Zero-party data is information that customers intentionally share of their own accord. It is valuable because it can be used to deliver highly personalized experiences and campaigns. So, digital capabilities that prompt customers for their input, feedback, and wish lists are important to implement.

Enterprise Data Fabric

An enterprise data fabric aims to provide a unified view of data across an organization’s various applications and data sources. It helps break down data silos. As you implement a CDP and/or a data lake, a well-designed data fabric is important to maximize the ROI from an enterprise insights program.

Identity Resolution

CDPs rely on resolving and unifying customer identities across different channels and devices. This requires the ability to match and merge customer records based on common identifiers and data attributes. Failing to resolve identities efficiently often results in data quality issues and inadequate analytics.
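Deterministic matching on shared identifiers is one common approach to identity resolution. The sketch below, with hypothetical identifier keys, uses a union-find (disjoint-set) structure so that transitive links also merge: if record A shares an email with B, and B shares a device ID with C, all three end up as one customer. Probabilistic or fuzzy matching on attributes such as names and addresses is outside its scope, and the lower-case normalization is deliberately simplistic.

```python
def resolve_identities(records, keys=("email", "device_id", "loyalty_id")):
    """Group record indices that share any identifier value (deterministic matching).
    Union-find makes transitive links merge as well."""
    parent = list(range(len(records)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    def union(i, j):
        root_i, root_j = find(i), find(j)
        if root_i != root_j:
            parent[root_j] = root_i

    first_seen = {}  # (key, normalized value) -> first record index carrying it
    for idx, rec in enumerate(records):
        for key in keys:
            value = rec.get(key)
            if not value:
                continue
            norm = (key, str(value).strip().lower())
            if norm in first_seen:
                union(first_seen[norm], idx)
            else:
                first_seen[norm] = idx

    groups = {}
    for idx in range(len(records)):
        groups.setdefault(find(idx), []).append(idx)
    return sorted(groups.values())
```

Each returned group is one resolved customer; its member records can then be merged into a single profile.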
Privacy and Security

A CDP should have robust privacy and security capabilities to protect customer data and ensure compliance with relevant regulations, such as GDPR and CCPA. This includes capabilities such as data encryption, access controls, and audit logging.

Integration and Interoperability

A CDP should be able to integrate with other marketing and technology platforms, such as marketing automation tools, CRM systems, and data management platforms, to enable seamless data exchange and campaign orchestration.

Summary

An AI-powered CDP (Customer Data Platform) can help media companies monetize the vast amounts of data

Utilizing SASE for Improved Cybersecurity in Enterprise Applications

What is SASE in Cybersecurity?

Secure Access Service Edge (SASE) is the next step in cybersecurity. It is a rapidly emerging practice that seeks to unify disparate security practices into a cohesive, cloud-delivered model.

What do we mean by that? Today, most enterprises have a variety of deployment models for their applications, data, and end-user devices. To address this topology, a variety of security practices have been followed, from securing local networks in physical buildings to addressing mobile devices and securing multiple business applications in the cloud. SASE seeks to simplify this model by delivering verification through a Security-as-a-Service model in the cloud.

Moreover, the SASE framework does not assume a centralized service. Instead, security is verified at the edge, closer to where the users are. That yields higher performance while raising security levels. Gartner predicts that by 2024 almost 40% of all enterprises will have a concrete plan to move to the SASE model. So SASE is here to stay.

Key Components of the SASE Framework in Cybersecurity

The following are some key components of the SASE framework. As you can see, they are already being implemented to varying extents, so SASE is really a more conscious evolution of cybersecurity practices.

Zero Trust

As we move to the cloud, it is apparent that traditional definitions of trust will also change, perhaps for the better. In the Zero Trust model, there is continuous verification, that is, authentication and authorization. Contrast that with traditional approaches that used a “trust but verify” model: for example, if you are inside a physically secured building, you are assumed to have clearance. In the Zero Trust model, that vulnerability is addressed through verification barriers based on roles, usage, and other parameters.
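To make the contrast with location-based trust concrete, here is a minimal Python sketch of a per-request Zero Trust check. Every request is evaluated on identity verification, device posture, role, and a risk score; network location is never an input. The role mapping, field names, and risk threshold are hypothetical assumptions, not taken from any SASE product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    user: str
    role: str
    resource: str
    device_compliant: bool
    mfa_verified: bool
    risk_score: float  # e.g. derived from log and usage-pattern monitoring; 0 = low, 1 = high


# Hypothetical role -> allowed resources mapping
ROLE_PERMISSIONS = {
    "analyst": {"reports"},
    "admin": {"reports", "billing", "user_admin"},
}


def authorize(req, max_risk=0.7):
    """Evaluate every request on its own merits; being 'inside' grants nothing."""
    if not req.mfa_verified:
        return False, "authentication not verified"
    if not req.device_compliant:
        return False, "device not compliant"
    if req.resource not in ROLE_PERMISSIONS.get(req.role, set()):
        return False, "role not permitted"
    if req.risk_score > max_risk:
        return False, "risk too high"
    return True, "allowed"
```

Because the risk score is re-read on every call, a spike detected in monitoring revokes access on the very next request, which is how continuous verification narrows the impact of a breach.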
This continuous verification in the Zero Trust model is done through a variety of techniques, including monitoring of logs and usage patterns. As a result, the impact radius of a breach is likely to be reduced, because access can be restricted as close to a security event as possible. A Zero Trust model will also reduce human errors and latencies when authorization and authentication parameters change for applications, individuals, and their devices.

Firewall as a Service (FWaaS)

As applications have moved to the cloud, traditional firewalls have evolved to be offered as cloud services. As application complexity, the use of third-party SaaS services, and user access patterns changed due to remote and mobile access, firewalls had to evolve too. The firewall as a service model means that all traffic is routed through a cloud-based firewall, which can make the right decisions depending on the context of use and the latest policies. Just as with Zero Trust, this has significant advantages over the traditional, fragmented means of protecting access.

Software Defined Wide Area Network (SD-WAN)

SD-WAN allows organizations to manage the network from a central location instead of relying on traditional wide area network (WAN) technologies like MPLS. Deployment is simplified because administrators can set policies and manage the network centrally. Using software to manage and control the network also eliminates the need for proprietary hardware, reducing cost and complexity. SD-WAN is an essential part of the SASE framework.

Central Control Tower

The central control tower provides centralized management and a single point of control over network and security functions. It is responsible for enforcing security policies, providing visibility into network traffic (for monitoring), and automating the deployment of network functions (such as SD-WAN) and other security functions.
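The FWaaS and control tower ideas fit together: one centrally managed, versioned rule set that every edge location evaluates for each flow. The sketch below is a simplified illustration under assumptions (first-match-wins rules, default deny, and edge nodes that pull the latest policy before each decision); the class names are hypothetical, and real FWaaS products offer far richer matching.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    action: str           # "allow" or "deny"
    src_cidr: str         # source network, e.g. "10.0.0.0/8"
    dest_app: str         # destination application name; "*" matches any
    port: Optional[int]   # None matches any port


@dataclass(frozen=True)
class PolicySet:
    version: int
    rules: tuple          # ordered; the first matching rule wins
    default_action: str = "deny"


def evaluate(policy, src_ip, dest_app, port):
    """Return the action of the first rule matching the flow, else the default."""
    addr = ipaddress.ip_address(src_ip)
    for rule in policy.rules:
        if addr not in ipaddress.ip_network(rule.src_cidr):
            continue
        if rule.dest_app not in ("*", dest_app):
            continue
        if rule.port is not None and rule.port != port:
            continue
        return rule.action
    return policy.default_action


class ControlTower:
    """Hypothetical single source of truth for policy; edges pull from it."""

    def __init__(self, policy):
        self.policy = policy

    def publish(self, rules):
        self.policy = PolicySet(self.policy.version + 1, tuple(rules))


class EdgeNode:
    """Hypothetical edge enforcement point that always uses the latest policy."""

    def __init__(self, tower):
        self.tower = tower
        self.policy = tower.policy

    def sync(self):
        if self.tower.policy.version > self.policy.version:
            self.policy = self.tower.policy

    def check(self, src_ip, dest_app, port):
        self.sync()
        return evaluate(self.policy, src_ip, dest_app, port)
```

Publishing a new rule set once at the tower changes the decision at every edge on its next check, which is the centralized, real-time control the text describes.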
Using a control tower simplifies overall security operations and shortens decision making by ensuring that the necessary data is available centrally and in real time.

Next Steps

The move to SASE (Secure Access Service Edge) is the next logical step in streamlining and evolving your cybersecurity maturity. It is a crucial capability for protecting your business from both bad actors and operational oversights. Get in touch today for an audit of your applications and cybersecurity landscape. We’ll help you develop a practical roadmap towards SASE adoption and recommend the software platforms to adopt.

AI Led Customer Data Platform (CDP) for Retail

A Customer Data Platform (CDP) for retail helps retailers build a 360-degree view of their customers. This is important to improve customer experience and enhance commerce activity. In this blog, I want to highlight the difference between a CDP and a data lake, and then outline some key retail use cases for which you should pursue a CDP.

CDP vs Data Lake – The Difference

Since both are meant to ingest and store large amounts of data, are data lakes and CDPs the same? It turns out that the key difference is in the intent of the storage.

A data lake is meant to be a store of unprocessed data on which the desired business intelligence will be built as needed. Typically, we have seen data streams from all parts of the enterprise sent directly to the data lake. The idea has been that we should capture everything we can, because we never know what special insights a piece of data can hold. Once all the data is coming in, we can sift through it and extract the insights that we need.

A CDP, on the other hand, is created with a deliberate purpose. The data is ingested after we have defined what we need, based on the analytics we want to build to serve a specific purpose. Since we are no longer looking for a needle in a haystack, the time to create a CDP is arguably much shorter. However, by being laser-focused and building incrementally, there is always the possibility that we leave something of value out during the data integrations. For this reason, data lakes will generally also co-exist in an enterprise.

Indeed, a CDP often serves the same business intelligence scenarios and AI use cases that a data lake would enable. But given the way it’s conceptualized, a CDP provides quicker ROI. You could also think of a data lake powering a CDP as one of the many architectural approaches we may follow.
In both cases, Domo provides ready connectors to a variety of systems, including online commerce, clickstream data, and transactional information from systems such as fulfilment systems and the ERP. These connectors allow us to shorten time to market significantly. They also assist in performing systematic identity resolution so we can build holistic customer profiles.

What does a Retail CDP look like?

Our approach is to initiate a CDP program by clearly defining the purpose of the CDP. Then we select the right cloud platform, the right sources, and the right Domo connectors, outline the Domo BI engine, and deploy the right AI modeling. This helps us deliver ROI to the business as soon as possible while keeping the path open for incremental value development. Our 5-layered customer data platform architecture outlines our thinking on this.

For example, when we started to define our own Retail CDP solution as a Domo App, we began by speaking with clients about the top use cases they were looking for. The following three use cases surfaced as high priority. Domo already provides a Retail App on its marketplace. It does a great job of demonstrating how dashboards can be deployed quickly to start yielding ROI. In this blog, we’ll extend that concept to include AI and other uses as well.

Marketing Use Case for a CDP

With so much going on in marketing technology, with content management, dynamic pricing, churn prediction, and advertising on multiple channels, it has become imperative for brand managers and marketers to maximize the value of their martech investments. A CDP is the perfect complement to the martech stack because it can not only provide aggregated information about a customer or a segment, but can also quickly deliver predictive, and often prescriptive, insights through AI to positively assist customers in their journeys.
Intent-based targeting and analytics is a great use case for getting customers to ultimately complete their purchase. Using the drop-off and journey data available to us, combined with customer demographic data, we can not only encourage customers to take action but, more importantly, improve customer journeys and address objections better.

For example, we helped a client pinpoint channel conflicts between multiple brands by effectively using multiple sources of data. By anonymizing the promotions data of multiple brands and bringing it into one place, we were able to ensure that customer segments were updated to reflect the brand positioning of the various products (premium vs value was the easiest one). In the absence of this analytics, the brands were not only realizing lower ROI on their spend, but were also causing brand confusion in the minds of consumers.

Another great use case is omni-channel commerce: when customers drop off online, we could continue the journey in the store and vice versa. Even online, retargeting is a great way to keep customers engaged. A complementary technique is to use intent data and segmentation to predict what customers might want next. Today’s recommendation engines are getting better, but there is still a lot that can be improved. Domo provides connectors to a variety of marketing systems, which shorten the time needed to deploy effective analytics capabilities.

Store Analytics & Pricing Use Case

In any retail business with multiple locations, it is important to keep track of store performance and the factors that drive it. It is also critical to understand how pricing changes and promotions influence commerce activity. The ready-made Domo retail app does a great job of providing dashboards around these important metrics. It has a ready dashboard powered by a well-defined schema. All we have to do is ingest the data.
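A first, very rough way to quantify how a price change influenced commerce activity at a store is the midpoint (arc) price elasticity of demand between a before and an after observation. This is a standard economics formula, not the method used by the Domo retail app; it ignores confounders such as seasonality and concurrent promotions, which is exactly where AI models add value. The store names and figures in the usage below are hypothetical.

```python
def arc_elasticity(p0, q0, p1, q1):
    """Midpoint (arc) price elasticity of demand between two observations."""
    if p0 == p1:
        raise ValueError("price did not change")
    pct_change_qty = (q1 - q0) / ((q0 + q1) / 2)
    pct_change_price = (p1 - p0) / ((p0 + p1) / 2)
    return pct_change_qty / pct_change_price


def classify(elasticity):
    """|e| > 1: demand is elastic (a price cut raises revenue); |e| < 1: inelastic."""
    if abs(elasticity) > 1:
        return "elastic"
    if abs(elasticity) < 1:
        return "inelastic"
    return "unit elastic"


def store_elasticities(observations):
    """observations: store_id -> ((price_before, units_before), (price_after, units_after))."""
    return {
        store: arc_elasticity(p0, q0, p1, q1)
        for store, ((p0, q0), (p1, q1)) in observations.items()
    }


# Hypothetical stores: a price cut from 10 to 9 at both
results = store_elasticities({
    "store_north": ((10.0, 100), (9.0, 120)),
    "store_south": ((10.0, 100), (9.0, 102)),
})
```

For store_north the elasticity is about -1.73, so the cut raised revenue (10 * 100 = 1000 versus 9 * 120 = 1080), while store_south responded inelastically and lost revenue.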
Ignitho’s solution builds on top of the Domo retail app by providing AI-powered insights, which enable you to take prescriptive actions. For example, one of the AI models is for customer

Improving Data Governance with MS Purview to Deliver Better AI

Every large enterprise faces tremendous challenges in managing data, maintaining compliance, and identifying the sources of truth for better business performance. Often, it is not feasible to store and manage everything centrally, because the application portfolio evolves constantly and different parts of the enterprise move at different speeds. Entropy is real, and it is a very good thing to have. So, in this blog I wanted to introduce a Microsoft solution that addresses this need. Microsoft Purview is a comprehensive solution that helps us deliver a bird’s-eye view of data across the enterprise.

Create unified data maps across a diverse portfolio

Microsoft Purview allows us to create integrations with the on-premises, cloud, and SaaS (Software as a Service) applications in your portfolio. All we need to do is set up the right integrations with the right authentication and authorization; Purview does the rest. The data can then be classified using custom or predefined classifiers. This is an especially useful exercise for understanding how data is fragmented and stored across multiple systems. As we classify data elements, it becomes easier to identify where sensitive data resides. This identification has many real-world use cases, including compliance with data privacy and masking rules, regulations around data domicile, and compliance with GDPR (General Data Protection Regulation).

In almost any enterprise technology transformation program, identifying the golden source of data is critical. Often, these data mappings are kept in offline documents, and sometimes only as latent knowledge in people’s minds. However, as the application portfolio evolves, this becomes incredibly difficult to manage. A solution such as Microsoft Purview provides immense benefits by offering a simpler way to manage data lineage and discoverability.
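To make lineage and impact analysis concrete, here is a minimal sketch that treats a data map as a directed graph from each asset to the assets it feeds. It answers two questions: which downstream assets a change could affect, and which upstream sources ultimately feed a given asset (a rough proxy for finding its golden source). This is a generic graph traversal under stated assumptions, not the Microsoft Purview API, and the asset names are hypothetical.

```python
from collections import deque

# Hypothetical data map: each asset points to the assets it feeds.
LINEAGE = {
    "crm.customers": ["staging.customers"],
    "erp.orders": ["staging.orders"],
    "staging.customers": ["warehouse.customer_360"],
    "staging.orders": ["warehouse.customer_360"],
    "warehouse.customer_360": ["bi.churn_dashboard", "ml.churn_features"],
}


def downstream_impact(lineage, changed_asset):
    """Breadth-first list of every asset that a change to changed_asset could affect."""
    seen = {changed_asset}
    impacted = []
    queue = deque([changed_asset])
    while queue:
        current = queue.popleft()
        for target in lineage.get(current, []):
            if target not in seen:
                seen.add(target)
                impacted.append(target)
                queue.append(target)
    return impacted


def upstream_sources(lineage, asset):
    """Assets with no inputs of their own that ultimately feed the given asset."""
    fed_by = {}  # reverse edges: asset -> assets that feed it
    for source, targets in lineage.items():
        for target in targets:
            fed_by.setdefault(target, []).append(source)
    roots, seen, stack = set(), {asset}, [asset]
    while stack:
        current = stack.pop()
        parents = fed_by.get(current, [])
        if not parents and current != asset:
            roots.add(current)
        for parent in parents:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return roots


if __name__ == "__main__":
    print(downstream_impact(LINEAGE, "crm.customers"))
    print(sorted(upstream_sources(LINEAGE, "ml.churn_features")))
```

In this example, changing `crm.customers` flags the staging table, the customer 360 table, the churn dashboard, and the churn features, while tracing `ml.churn_features` upstream leads back to the CRM and ERP tables.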
The ability to use business-friendly names allows even business users to search for and determine where data elements are located and how they flow. In addition, technology impact analysis becomes much easier when we know how a change can affect the data map. Microsoft Purview also has a data sharing feature. If that is feasible in your implementation, it is an excellent mechanism for sharing data between organizations while maintaining visibility of data lineage.

AI & Analytics Improvements

It is commonly estimated that 50-70% of the effort in a typical AI program is spent on aggregating, reconciling, and cleaning up data. Adopting Microsoft Purview helps address many of the root causes of these complex and time-consuming issues. Data pipelines are easier to examine, refine, and build when we can be sure of the data lineage, the systems of record, and the sensitivity of the data, and the resulting aggregation is more usable because of higher data quality. We can also better avoid inadvertent compliance breaches as we ingest data for our analytical models. As the need for AI increases, and as teams of data scientists change frequently, a systematic solution becomes important. The sharing feature of Microsoft Purview can also be used to aggregate data for analytics. Potentially, this can transform the way we have traditionally thought about data aggregation and integration into destination data stores.

Next steps

I hope I have been able to clearly bring out the benefits of using Microsoft Purview: visibility into data across your digital estate, mitigation of compliance risks, and faster ROI (return on investment) from AI and analytics programs. That said, it is a subscription service from Microsoft, so the right business case and use cases should be built before adoption. In addition, as with any new initiative, there are many topics, such as security, collections hierarchy, authorization, and automation, that merit a deep dive before adoption.
If you want to explore data governance and Microsoft Purview further, please get in touch for a discovery session.

How to Implement an Enterprise Data Framework

In this blog I’ll describe a solution to an urgent question facing enterprises today: “How do we implement a closed loop analytics framework that can scale as the number of use cases and data sources increases?” This is not an easy problem to solve because, by its very nature, any technology implementation becomes outdated very quickly. However, thinking in terms of the entire value chain of data helps channel this inevitable entropy so that we can maximize the value of our data.

The 5 Stages of a Closed Loop Data Pipeline

Typically, when we develop a new application or digital capability, the focus is on doing it well. That means we place a lot of emphasis on agile methodology, our lifecycle processes, DevOps, and other considerations. Rarely do we think about two questions. First, what will happen to the zero- and first-party data that the application is about to generate? Second (and to a lesser degree), what existing insights can the new application use to improve its outcomes? As a result, the integration of data into the analytics and AI pipeline is often accomplished through separate data initiatives. In my view, this spans the data strategy and data operations stages of the closed loop analytics model.

Stage 1

From a data strategy perspective (the first stage), it is critical to understand the value of zero-party and first-party data across all parts of the enterprise, and then to create a plan for combining it with relevant third-party data. This stage defines how we adopt the capabilities of the cloud and which technologies will likely be used. It also helps us create a roadmap of the business insights to be generated, based on feasibility, costs, and of course benefits. Finally, feeding these data strategy considerations into the governance of the software development lifecycle helps unlock the benefits that enterprise data can deliver.

Stage 2

The second stage, which is closely linked, is data operations.
This is the better-known aspect of the data management lifecycle and has been a focus of improvement for several decades. Legacy landscapes use ETL (extract, transform, load) batch programs that map and transfer data into different kinds of data warehouses after the data has been matched and cleaned so that it is consistent. We then implement various kinds of business intelligence and advanced analytics on top of this golden source of data. As technology has progressed, we have made great strides in applying machine learning to resolve data inconsistencies, especially in third-party data received into the enterprise. And now we are moving to the concept of a data fabric, where applications plug straight into an enterprise-wide layer so that latencies and costs are reduced. Master data management is also becoming more centralized so that inconsistencies are minimized.

Stage 3

Stage 3 of the data management lifecycle is compliance and security. This entails several things, including but not limited to:

- Maintaining the lineage of each data element group as it makes its way to different applications
- Ensuring that the right data elements are accessible to applications on an auditable basis
- Ensuring that the data is masked correctly before being transmitted for business intelligence
- Ensuring that compliance with regulations such as GDPR and COPPA is managed
- Encrypting data at rest and in transit
- Controlling and tracking access for operational support purposes

Clearly, meeting these compliance and security needs is not a trivial matter. So I find that even as the need for AI and Customer Data Platforms (CDPs) has increased, this area still has a lot of room to mature.

Stage 4

Stage 4 is about insights generation. Of late, this stage has received a lot of investment and has matured quite a bit. There is an abundance of expertise (including at Ignitho) that can create and test advanced analytics models on the data to produce exceptional insights.
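Of the Stage 3 requirements, masking data before it is transmitted for business intelligence is the easiest to sketch. The example below is a minimal illustration, assuming a hypothetical record layout and hypothetical lists of sensitive fields (in practice these would come from a data classification exercise). It drops direct identifiers such as names and replaces other sensitive values with a keyed HMAC-SHA256 hash, so the same customer still joins consistently across datasets. Keyed hashing is pseudonymization rather than anonymization, so under GDPR the output is generally still personal data, and the key must be protected.

```python
import hashlib
import hmac

# Hypothetical classification results: fields to pseudonymize and fields to drop outright.
SENSITIVE_FIELDS = frozenset({"email", "phone"})
DROPPED_FIELDS = frozenset({"full_name"})


def pseudonymize(value, key):
    """Keyed HMAC-SHA256 of a value; the same input and key always give the same token."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_record(record, key, sensitive=SENSITIVE_FIELDS, dropped=DROPPED_FIELDS):
    """Return a copy of record that is safer to send to a BI tool."""
    masked = {}
    for field, value in record.items():
        if field in dropped:
            continue  # direct identifiers are not needed for reporting
        if field in sensitive and value is not None:
            masked[field] = pseudonymize(str(value), key)
        else:
            masked[field] = value
    return masked


if __name__ == "__main__":
    # Assumption: in practice the key is fetched from a managed secret store.
    secret = b"replace-with-a-managed-secret"
    row = {"customer_id": 7, "full_name": "Ana Diaz", "email": "ana@example.com", "spend": 120.0}
    print(mask_record(row, secret))
```

Because the token is deterministic for a given key, analysts can still count distinct customers or join tables on the masked column without seeing the raw value; rotating the key breaks those joins, which is sometimes the desired effect.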
In addition to advanced analytics and machine learning, the area of data visualization and reporting has also matured significantly. Through our partnerships with AWS, Microsoft, and a host of other visualization providers such as Tableau, we are developing intuitive, real-time dashboards for our clients. However, I believe that success at this stage depends heavily on the first 2 stages: data strategy and data operations.

Stage 5

Stage 5 is another area that is growing in maturity but is not quite there yet. This stage is all about taking the insights we generate in stage 4 and using them to improve operations directly, in an automated fashion. In my experience, I often see 2 challenges in this area.

The first is that the blueprint for operationalizing insights is still maturing. A logical path for a cloud native organization would be to feed these insights into applications as they are generated, so that they result in both assisted and unassisted improvements. However, because these automated integrations are lacking, manual use of insights is more prevalent. Anything else requires large investments in multi-year technology roadmaps.

The second challenge is the inherent entropy of an organization. New applications, customer interactions, and support capabilities must constantly be developed to meet various business goals. And because data strategy (stage 1) is not a key consideration during implementation (or even a feasible one), the entropy is left to be addressed later.

Summary

The emergence of AI and analytics is a welcome but challenging trend. It promises dramatic benefits, but it also requires us to question existing beliefs and ways of doing things. It is also a matter of evolving our understanding of this complex space. In my view, stage 1 (data strategy) and stage 3 (compliance and security) are key to making the other 3 stages successful. This is because stages 2 and 4 will see investment whether or not we address stages 1 and 3.
The more attention we give to stages 1 and 3, the more our business benefits will be amplified. Take our online assessment on analytics maturity and

Building the Cognitive Enterprise with AI

Enterprise Cognitive Computing (ECC) is about using AI to enhance operations through better decision making and interaction. In fact, this is how the impact of AI is maximized: by directly influencing operational efficiency and customer experience. Implementations of enterprise cognitive computing are a natural combination of digital, data, and enterprise integration, simply because the insights we generate must reach the target application points and then be used through digital means. In this blog, we will outline a few real-life use cases and then discuss the key enablers of Enterprise Cognitive Computing.

Use Cases

Customer Experience

One of the key focus areas for Ignitho is helping media publishers engage customers better. As business pressures mount, generating new revenue models and providing customers with highly personalized experiences is becoming vital. Given the large trove of zero- and first-party data, enterprise cognitive computing can generate the contextual information needed to provide unique experiences to customers. In addition, while traditional analytics use cases have shown customers what they have seen and liked before, differentiation can perhaps be created by capturing appropriate zero-party data to do something different and hyper-personalized for an audience of one. These stated customer requests, captured as zero-party data, can then be channeled into the algorithms to present a better overall experience, all the while monitoring customer receptiveness and continuously refining their journeys by improving our interactive digital capabilities.

Operational Improvements

Improvements in natural language processing (NLP) have opened the door to substantial efficiency gains. For example, incoming customer remarks and comments are often still parsed largely by hand. Now, better NLP capabilities, combined with integration with enterprise systems to build full context, will allow increased levels of automation.
Not only does this reduce costs, but it also improves customer experience significantly. Similarly, improvements in automated fraud detection and next-best-action suggestions are making customer service teams much more efficient and empowered. As a result of enterprise cognitive computing, our customer interaction channels can be more responsive. They can also be made more interactive through the use of conversational agents such as chatbots.

Key Enablers of Enterprise Cognitive Computing

While being AI-led is the core of Enterprise Cognitive Computing, it is really a strategic combination of AI, data management, enterprise integration and automation, and digital engineering.

Artificial Intelligence (AI): Advances in and implementation of AI techniques are no doubt the tip of the iceberg and critical to the success of an enterprise cognitive computing capability. With advances in natural language processing (NLP) and machine learning, the data and context of operations can be much better understood and acted upon. We have an abundance of talent creating advanced analytical models for various uses, testing them, and generating insights for almost every area of business across the customer lifecycle: attracting, acquiring, servicing, and retaining.

Data management: This can be considered the backbone of enterprise cognitive computing. Successful implementation of AI needs high-quality, diverse, and consistent data. Modern data management techniques, ranging from building a Customer Data Platform (CDP) to creating enterprise-wide data pipelines, are helping organizations cope with the enormous amounts of data being generated. Ignitho has a comprehensive 5-step data management framework to address this issue.

Digital Engineering: You can consider digital engineering to be the channels through which insights are propagated to the systems of engagement.
These could be conversational agents on the website or plugins within portals that allow customer service or operations teams to work better and faster. As digital tools and technologies become more widely available, selecting those that best meet the needs of your enterprise is crucial. Through its product engineering offering, aided by its innovation labs, Ignitho can rapidly enable experimentation with emerging tech, along with microservices and API-based architectures that promote agility and composability.

Integration & Automation: Consider this the plumbing of your enterprise. Creating robust data pipelines or a data fabric requires an enabling architecture, and enterprise integration makes that possible. These integration techniques allow applications to communicate with each other without non-scalable, point-to-point integrations. In addition, automation techniques such as Robotic Process Automation (RPA) can bridge the time-to-market gap for new capabilities. That’s because while technological remediation of gaps in a business process (e.g. sending data automatically to another application) can be delayed because of costs and other factors, the intended results can still be achieved through judicious use of RPA.

An Exciting Future

Enterprise Cognitive Computing promises highly contextual experiences at scale. To tap into the full potential of this innovation, we must think strategically about the entire gamut of data and technology enablers needed. Our Data Analytics Assessment covers the end-to-end lifecycle, so feel free to check it out and see where you stand.

Unlock the Power of AI for Business

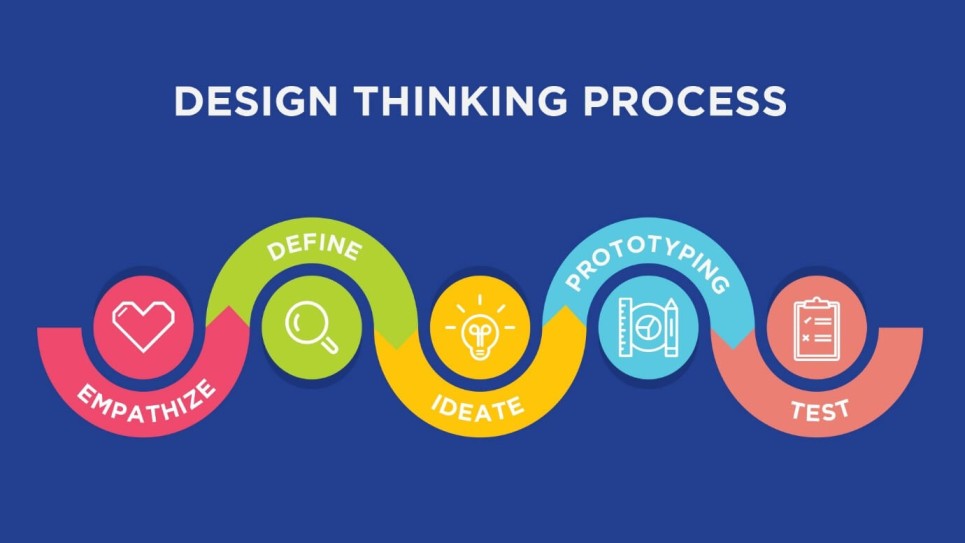

I recently wrote a Forbes article on leveraging the power of AI for your business. In that article, I highlighted two specific items from a broader framework for accelerating the generation of value. The first is the application of design thinking to analytics and AI initiatives. Using a realistic use case, I explained why it is vital to step back and understand not only the problem we are trying to solve, but also whether the solution truly meets the needs of users. In addition, it is important to think about the future business strategy and how our technological solution will support that desired direction. The second principle was creating closed loop analytics to amplify the ROI of your investments. I discussed constantly improving the data sources used for insights generation, and actually incorporating insights into operations. These problems are not trivial, so it is important to set up a robust data architecture and data pipeline model.

To provide a fuller perspective, in this blog I wanted to briefly outline the overall framework for data science that we follow at Ignitho. This framework truly allows us to bring alive our mission of bringing human-centric engineering and AI to our clients. The framework has 2 parts: the data science loop and the underlying principles. Together, these 2 parts help us unlock the potential of AI for our clients.

The article further adds to our journey of igniting thought through Ignitho’s strong focus on AI-driven, human-centric digital engineering and thought leadership in digital innovation and transformation. It comes on the back of Gartner’s study on business composability and CIOs’ increasing dependence on AI for accelerated business growth, an area in which Ignitho has strong expertise. Enterprises and service providers often struggle with data lifecycle management, which needs a holistic view rather than one restricted to the constraints of service agreements.
Data lifecycle management, when backed by sound design thinking principles, continues to dominate the AI and data science space, keeping users at the centre of business growth and more efficient business operations. Yet design thinking and end-to-end data frameworks generally do not get discussed by enterprises, and that gap is not doing them any good.

In my experience, and in line with what we continue to do at Ignitho Technologies, one needs to close the loop across the following 5-step Data Lifecycle:

- Data Strategy: Leverage decision insights from data derived from a solid foundation
- Data Ops: Create resilient and effective data pipelines
- Compliance and Security: Reduce the risk of data loss and privacy breaches from the ground up
- Insights Generation: Generate insights that are not just predictive but also prescriptive
- Insights Operationalization: Make sure the insights are internalized into the right business processes

The closed loop Data Lifecycle Framework works as a self-sustaining model with a set of processes and constant optimization. But how does one ensure that this Data Lifecycle is brought to life? What brings the framework to life is Design Thinking: asking the right questions at the right level and focusing on internal and external user needs. Design thinking, when done right, evaluates the customer’s point of view while also considering the goals and objectives that the business itself needs to accomplish.

Not sure how to take the first step towards accelerating your AI adoption? Here’s our short Online Analytics Maturity Assessment, which CXOs across enterprises have appreciated as an eye-opener. We are sure it will help you gauge your organisation’s AI adoption, so do give it a try. The results are delivered in real time across 5 dimensions, and you can compare how your organisation scored against others. We would love to know your feedback.
We are at the cusp of new opportunities, and our POD-based tribes, led by our CTO, Ashin Antony, continue to scale up as experts in AI-enabled, human-centric engineering. We are excited about this journey of igniting thought. Do let us know your feedback and queries.